Tuning & Learning

Fine-Tune Models on Your Domain Data

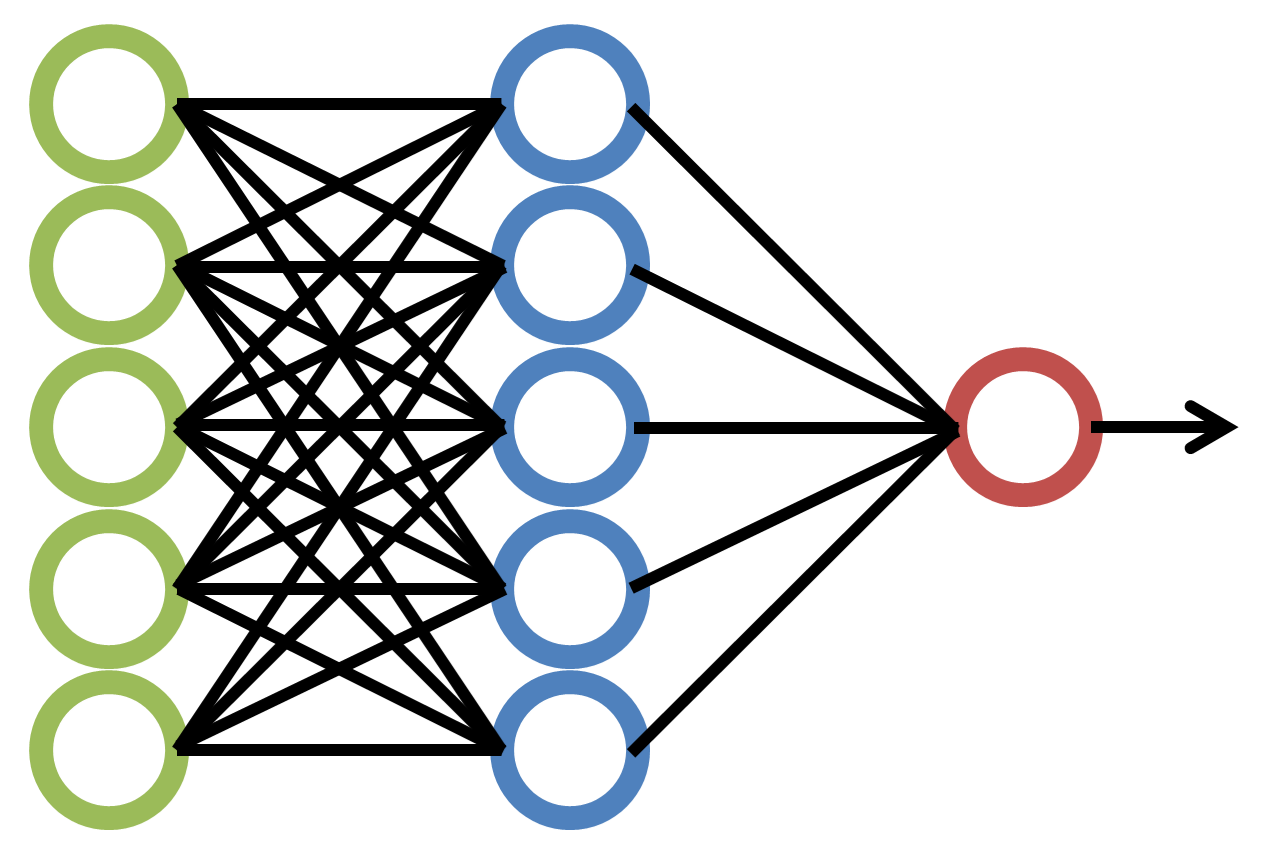

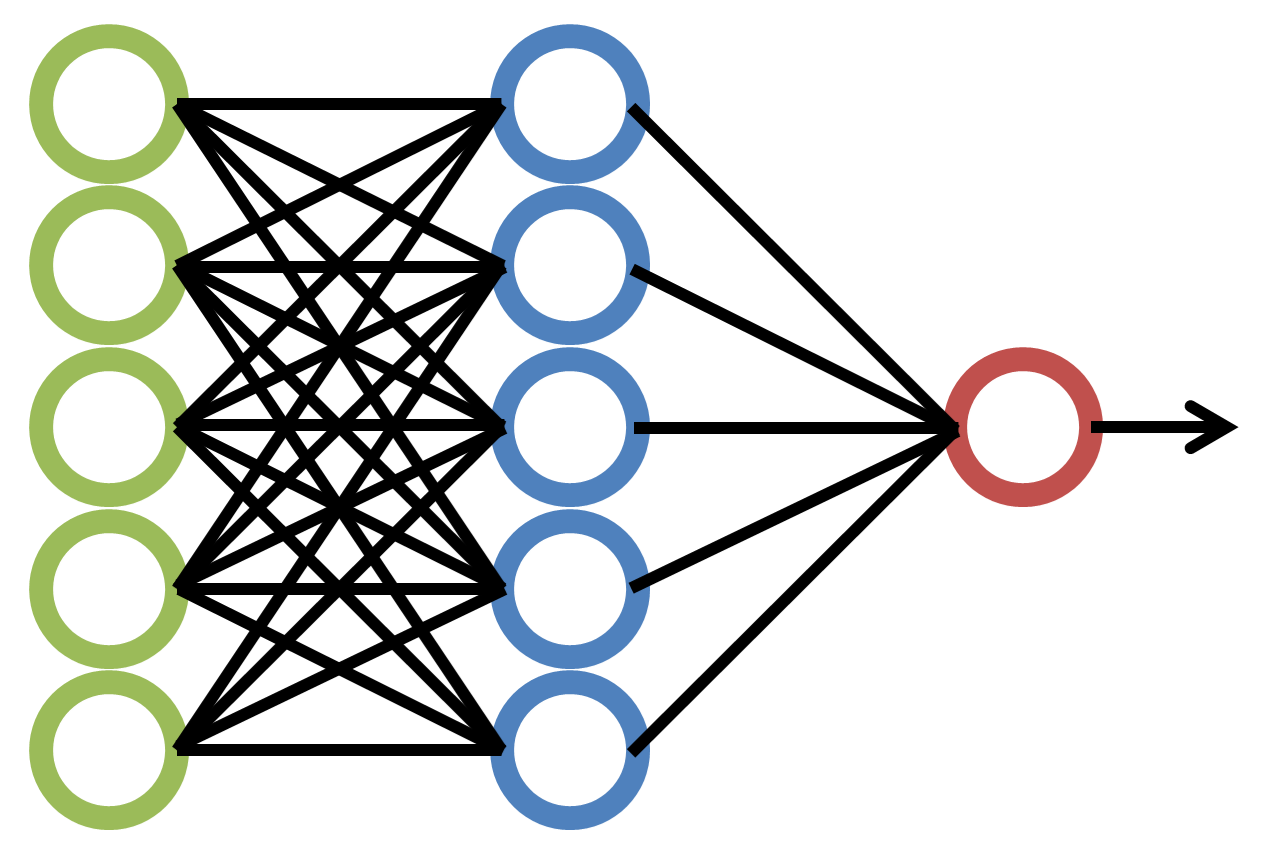

Tuning & Learning turns general-purpose language models into domain specialists. Using LoRA (Low-Rank Adaptation) fine-tuning and knowledge distillation, we adapt 72B-parameter models to your industry's terminology, reasoning patterns, and quality standards, without retraining from scratch.

Domain Specialisation

A 72B-parameter model knows a lot about everything. Your business needs a model that knows a lot about your documents, your terminology, and your workflows. Tuning & Learning bridges that gap with parameter-efficient fine-tuning, which preserves the model's general capabilities while adding deep domain expertise.

Tuning Methods

- LoRA Fine-Tuning: Low-rank adaptation that trains adapter weights amounting to less than 2% of the model's parameters, leaving the base weights frozen, while achieving domain-specific performance gains of 15-40% over the untuned model

- Knowledge Distillation: Train smaller, faster models (27B, 8B) that inherit the reasoning patterns of larger models on your specific use cases

- Reinforcement from Feedback: Incorporate human expert feedback to align model outputs with your quality standards
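To make the LoRA idea concrete, here is a minimal pure-Python sketch, not the production training code. It assumes the standard LoRA formulation, in which a frozen weight matrix W is combined with a scaled low-rank product B·A. The names `LoRALinear` and `adapter_fraction`, the `alpha` default, and the layer sizes are illustrative assumptions.

```python
import random


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector (list of floats)."""
    return [sum(a * b for a, b in zip(row, vector)) for row in matrix]


class LoRALinear:
    """Frozen weight W plus a trainable low-rank update (alpha / rank) * B @ A."""

    def __init__(self, weight, rank, alpha=16.0, seed=0):
        rng = random.Random(seed)
        d_out, d_in = len(weight), len(weight[0])
        self.weight = weight  # frozen: never updated during tuning
        # A starts small and random, B starts at zero, so the adapter
        # contributes nothing until training moves B away from zero.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(rank)]
        self.B = [[0.0] * rank for _ in range(d_out)]
        self.scale = alpha / rank

    def forward(self, x):
        base = matvec(self.weight, x)
        update = matvec(self.B, matvec(self.A, x))
        return [b + self.scale * u for b, u in zip(base, update)]


def adapter_fraction(d_in, d_out, rank):
    """Adapter parameter count relative to the frozen matrix it adapts."""
    return rank * (d_in + d_out) / (d_in * d_out)
```

For a hypothetical 8192 x 8192 projection at rank 16, `adapter_fraction(8192, 8192, 16)` evaluates to 0.00390625, roughly 0.4% of that matrix. The share for a whole model depends on which layers carry adapters and at what rank.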

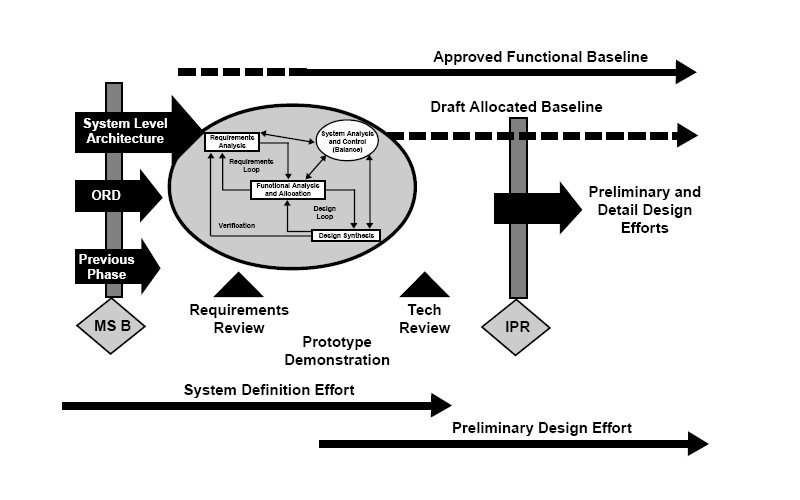

The Training Pipeline

- Data Preparation: Curate training examples from your documents with our annotation tools

- Baseline Evaluation: Measure the untuned model against your specific use cases

- Fine-Tuning: Apply LoRA adapters on your dedicated GPU infrastructure

- Evaluation: A/B test tuned vs. base model on held-out examples

- Deployment: Deploy the tuned model to your CorpusAI instance
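The baseline and A/B evaluation steps above can be sketched as a paired comparison on held-out examples. This is one possible approach, not a description of the included framework. It assumes each example already has a numeric quality score for both models (higher is better) and uses a percentile bootstrap for the confidence interval. The function name `paired_ab_report` and its parameters are hypothetical.

```python
import random
from statistics import mean


def paired_ab_report(base_scores, tuned_scores, n_boot=2000, seed=0):
    """Compare tuned vs. base scores measured on the same held-out examples."""
    if len(base_scores) != len(tuned_scores) or not base_scores:
        raise ValueError("need equal-length, non-empty score lists")

    # Per-example improvement of the tuned model over the base model.
    diffs = [t - b for b, t in zip(base_scores, tuned_scores)]
    n = len(diffs)

    # Percentile bootstrap: resample the differences with replacement
    # and record the mean of each resample.
    rng = random.Random(seed)
    boot_means = sorted(
        [mean([rng.choice(diffs) for _ in range(n)]) for _ in range(n_boot)]
    )
    lower = boot_means[int(0.025 * n_boot)]
    upper = boot_means[int(0.975 * n_boot) - 1]

    base_mean = mean(base_scores)
    return {
        "mean_base": base_mean,
        "mean_tuned": mean(tuned_scores),
        "mean_diff": mean(diffs),
        # Relative gain is undefined when the base mean is zero.
        "relative_gain": mean(diffs) / base_mean if base_mean else None,
        "win_rate": sum(d > 0 for d in diffs) / n,
        "ci95": (lower, upper),
    }


if __name__ == "__main__":
    report = paired_ab_report([0.5, 0.6, 0.4, 0.7], [0.7, 0.6, 0.6, 0.8])
    for key, value in report.items():
        print(key, value)
```

If the interval in `ci95` excludes zero, the measured improvement is unlikely to be a resampling artefact. With only a handful of held-out examples the interval will be wide, so a larger evaluation set gives a more trustworthy comparison.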

Technical Details

- LoRA fine-tuning that trains less than 2% of the model's parameters

- 15-40% domain-specific performance improvement

- Knowledge distillation from 72B to 27B/8B models

- All training runs on your dedicated GPU infrastructure

- No training data leaves your jurisdiction

- A/B evaluation framework included
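As an illustration of the distillation item above, the following sketch computes a common distillation objective: the KL divergence between temperature-softened teacher and student output distributions, scaled by the square of the temperature. The page does not state which objective is used in practice, so treat the loss form, the `temperature` default, and the function names as assumptions.

```python
import math


def log_softmax(logits, temperature):
    """Numerically stable log-softmax of logits divided by a temperature."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    log_total = peak + math.log(sum(math.exp(z - peak) for z in scaled))
    return [z - log_total for z in scaled]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    if len(teacher_logits) != len(student_logits):
        raise ValueError("teacher and student must score the same vocabulary")
    log_p = log_softmax(teacher_logits, temperature)  # teacher (e.g. 72B)
    log_q = log_softmax(student_logits, temperature)  # student (e.g. 8B)
    kl = sum(math.exp(lp) * (lp - lq) for lp, lq in zip(log_p, log_q))
    # The T^2 factor keeps gradient magnitudes comparable across temperatures.
    return kl * temperature ** 2


if __name__ == "__main__":
    teacher = [2.0, 1.0, 0.1]
    print(distillation_loss(teacher, teacher))          # identical outputs
    print(distillation_loss(teacher, [0.1, 1.0, 2.0]))  # disagreeing student
```

The loss is zero when the student reproduces the teacher's distribution exactly and grows as the two diverge. In a real training loop this term is typically computed per token over the vocabulary and often mixed with the ordinary next-token loss.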

Services for Tuning & Learning

Related Products

CaveauCRM

Unified CRM platform connecting SuiteCRM, FOSSbilling, Baikal CalDAV, n8n workflows, and caveauAI into a single automated, AI-powered business hub — fully open source, EU-hosted, GDPR compliant.

Learn more

caveauAI

Upload thousands of documents and get citation-backed answers in seconds. caveauAI runs 72B-parameter models on dedicated GPUs you control, so no data ever leaves your infrastructure.

Learn more

The Knowledge Exchange

Package your domain knowledge into a secure AI corpus. We host the GPU and the RAG engine. You set the price. You keep 80% of the revenue. Build, curate, and publish knowledge packages for the Knowledge Exchange.

Learn more

Ready to Put This to Work?

Bring the documents, the workflow, or the integration question. We will tell you whether Tuning & Learning is enough on its own or needs a broader Blue Note Logic rollout.

Get in Touch