Model Tuning & Distillation

Adapt foundation models to your domain expertise

Model Tuning & Distillation is the implementation service behind the Tuning & Learning product. Our ML engineers work with your domain experts to prepare training data, run fine-tuning experiments, evaluate results, and deploy adapted models to your caveauAI infrastructure.

Domain Model Specialisation

Fine-tuning a language model is not just a technical exercise — it is a collaboration between ML engineers and domain experts. The engineers know how to adjust model parameters efficiently. The domain experts know what a correct answer looks like. Model Tuning & Distillation brings both together in a structured process.

Service Phases

- Domain Analysis: Understand your terminology, reasoning patterns, and quality standards

- Data Curation: Prepare training examples with your team using our annotation tools

- Experimentation: Run multiple fine-tuning configurations and evaluate against baselines

- Distillation: Create smaller, faster models that retain domain-specific performance

- Deployment: Integrate tuned models into your CorpusAI instance

- Monitoring: Track model performance and schedule periodic retuning

Tuning Methodology

- LoRA parameter-efficient fine-tuning

- Progressive knowledge distillation

- A/B evaluation with domain expert judges

- Continuous performance monitoring

- Periodic retuning schedule

Related Services

Corporate Memory Extraction & Sovereign Model Tuning

We embed a private RAG engine into your organisation. Your team uses it to search contracts, case law, and internal documents. Every interaction generates verified training data. After 10,000+ interactions, we distill that data into a sovereign AI model — smaller, faster, cheaper, and entirely yours.

Learn more

Document Intelligence Consulting

We help organisations design, deploy, and optimise caveauAI implementations — from corpus architecture to embedding strategy to production deployment.

Learn more

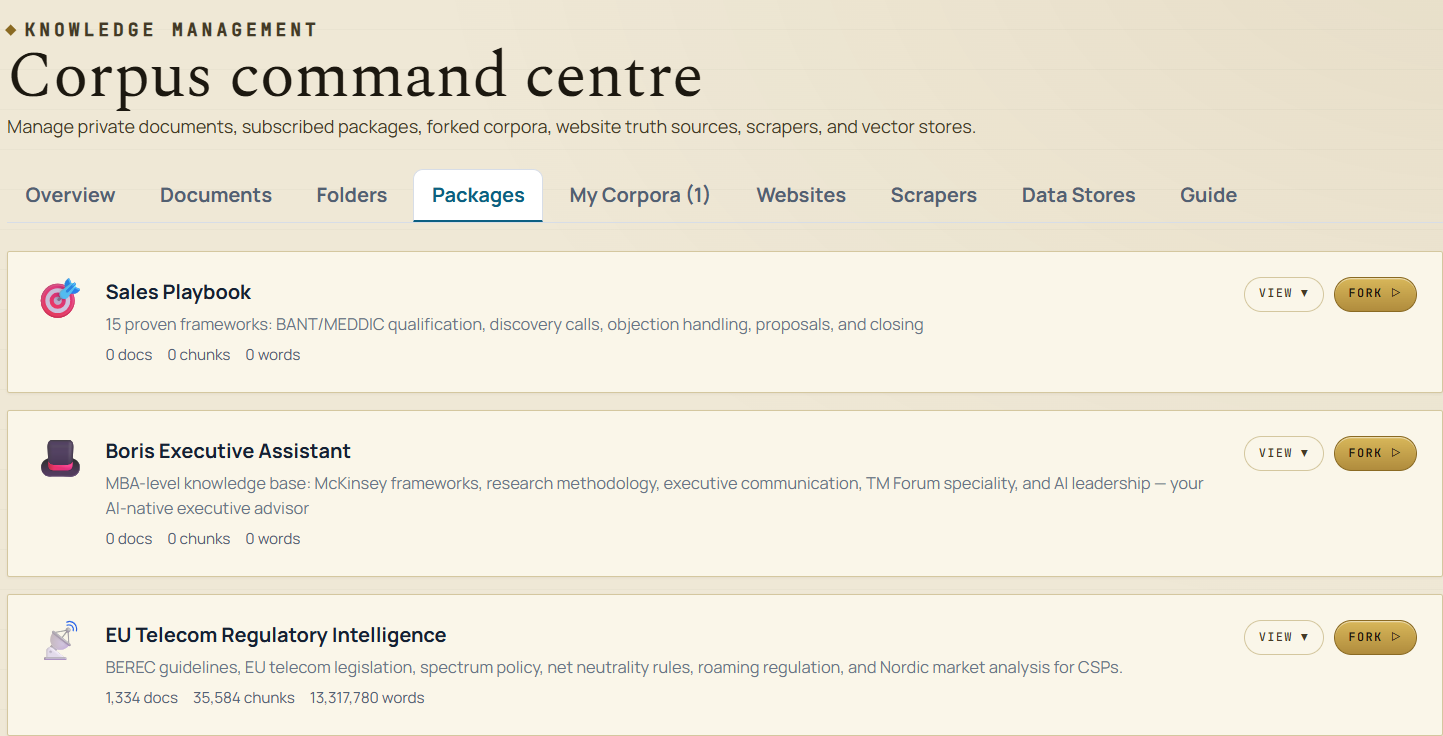

Knowledge Corpus Development

We help domain experts and organisations transform raw document collections into production-grade knowledge packages — structured, categorised, and optimised for AI-powered search. 80/20 revenue split in favour of the creator.

Learn moreReady to Turn This Into a Live Programme?

We can scope the delivery model, identify the right team shape, and outline the fastest practical path forward.

Start the Conversation